You could argue that if there were one single thing that you would expect PMOs to be good at, it would be Metrics. After all, PMOs often exalt the mantra that ‘the PMO is the single voice of truth!’. But while most PMO analysts could tell you that CPI = EV/AC and ROI = (Gain-Cost)/Cost, analysts seem to be far less savvy about the metrics that are important in agile environments. Well, never fear – we’ve pulled together the definitive collection of Agile Metrics for your performance-indicating pleasure.

Below, you will find details of agile metrics used to track flow, quality, and even satisfaction. In short, this page contains everything you need to know about agile metrics. Whether your teams are using Scrum, Kanban, or another agile framework, you’ve come to the right place.

What are Agile Metrics used for?

If you are a PMO person and are looking at Agile teams, it may be tempting to start pulling data to begin to assess and compare teams. But what you will discover as you read down this page is that it is not easy to compare groups using agile metrics. A piece of work that is ‘1 story point’ for one team may well be ‘3 story points’ for another. A team that is completing 40 story points per week is not necessarily better than a team completing ten story points per week. This is because the definition of a story point is one that is unique to the team. Other attempts to measure productivity can also backfire. It used to be common to measure lines of code written. But when we use it as a performance or productivity metric, we quickly see a decline in the quality of code. Quantity begins to win out over quality, which is the exact opposite of what we would want to achieve.

With agile teams, we are looking to satisfy customers through the continuous delivery of valuable software. That isn’t something I’ve just made up – it is lifted from the 12 principles behind the Agile Manifesto. In fact, the principles behind the Agile Manifesto make several statements that translate into measures. Let’s take a look at some of those principles:

The principles behind the Agile Manifesto for Software Delivery

- Deliver working software frequently, from a couple of weeks to a couple of months, with a preference to the shorter timescale. Agile teams should be delivering software regularly. When the Agile Manifesto was written, the idea of delivering every couple of weeks seemed radical, but many software teams are now releasing software to production multiple times per day.

- Business people and developers must work together daily throughout the project. Collaboration is vital for agile teams. If the development team is not engaged closely with the business, then they are less likely to deliver software that lives up to expectations. This engagement can be measured easily by observing attendance and participation at meetings and ceremonies, such as sprint reviews and showcases.

- Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done. How motivated are your people? There are many ways of assessing this, but the simplest way is often simply to ask the team. If you have a good trust relationship, they will usually tell you.

- Working software is the primary measure of progress. Rather than focusing on stage gates and other milestones, the primary rule is the delivery of software that actually does the job.

- Agile processes promote sustainable development. The sponsors, developers, and users should be able to maintain a constant pace indefinitely. We talked about motivated individuals earlier – we also want them to work sustainably. Teams who are coming in early, leaving late, and working weekends are likely to burn out and are not adhering to the agile principles. Ensuring the teams are maintaining a constant and sustainable pace is a crucial measure within agile.

- Continuous attention to technical excellence and good design enhances agility. Working valuable software that is sustainable means no shortcuts. It means code quality has to be good, and software is built in line with best practices for security and readability. Quality metrics are an essential consideration with agile approaches.

- At regular intervals, the team reflects on how to become more effective, then tunes and adjusts its behavior accordingly. Teams expect to improve continuously, and this can also be measured. Are the team holding regular retrospectives? Are they taking steps to improve themselves?

We asked ourselves the question ‘What are Agile Metrics for?’ but there is another crucial question:

Who are Agile Metrics for?

Agile teams are, to a greater or lesser degree, self-organizing. To tune and adjust behavior, they need to understand how they are doing. This is where metrics come in! Agile metrics are primarily a feedback loop for the team. Teams should regularly review Agile metrics, reflect and use the data to become more productive. Often the metrics are used as a starting point for a conversation. Imagine our metrics are showing that cycle time has worsened. The first step is for the team to understand why that is. It could be due to a unique, one-off event – that will right itself a few cycles down the line. Or it could be an indication that backlog grooming is not being performed adequately. Whatever the reason, the metrics are the indicator, and it is the team who reflect and diagnose.

Care should be taken when adding agile metrics into reports without commentary attached. Without explanation, assumptions can be made that can lead to incorrect diagnoses. And just like in medicine, a misdiagnosis can have serious consequences.

How should Agile Metrics be used?

Agile metrics should help achieve our goal of satisfying customers through the continuous delivery of valuable software. Once metrics are gathered, the first thing to do is reflect on what the data are telling the team. Coaches and PMO teams can often assist by calculating metrics, facilitating the discussion, and helping teams identify possible root causes.

Once the metrics have been reflected on and put into context, they can be used to help teams (and those who support them) tune their approach and become more productive.

The best way of doing this is by undertaking experiments based on a hypothesis. For example, a team may decide to implement WIP limits (limiting work in progress), working off the premise that doing so will improve cycle time and result in satisfying customers faster.

The supporting teams, including the PMO, Managers, and Coaches, should be looking for opportunities to support the teams and help improve their metrics where possible. Sometimes this may mean reducing the work going into a team. Other times, investment in software for load testing may be the right answer. Skillset and mindset issues can be resolved through training, mentoring, and coaching, or through moving people around. By taking this servant-leader approach, they maximize the chances of the team satisfying customers through the continuous delivery of valuable software.

Agile Metrics

I’ve grouped the metrics into three sections. Those that relate to the pace of delivery and value delivered are in the first section, followed by KPIs for quality, followed by metrics used to measure satisfaction.

Pace and Value

STORIES COMMITTED vs. COMPLETED

This metric gauges the team’s ability to predict its own capacity. Divide the number of stories completed by the number of stories committed and express the result as a percentage. The team then reviews any variance from 100%. During a sprint, progress is usually tracked using a burndown chart – check out our handy free Excel burndown chart here.
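To make the arithmetic concrete, here is a minimal sketch of the completed-versus-committed calculation. The function name and the sample sprint figures are made up for illustration.

```python
def commitment_ratio(completed: int, committed: int) -> float:
    """Return stories completed as a percentage of stories committed."""
    if committed <= 0:
        raise ValueError("committed must be a positive number of stories")
    return 100.0 * completed / committed


# Hypothetical sprint: 10 stories committed, 8 completed.
ratio = commitment_ratio(completed=8, committed=10)
variance = ratio - 100.0  # negative means fewer stories completed than committed
print(f"{ratio:.0f}% completed, variance {variance:+.0f} points")
```

A result above 100% is possible too, when a team pulls in extra stories mid-sprint, which is why the team reviews variance in both directions.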

VELOCITY

Velocity is the number of story points a Scrum team completes per sprint, and it establishes the team’s regular run rate. Teams usually strive for stable, predictable velocity, but may challenge themselves to increase velocity through efficiencies and automation. Track it by comparing the story points completed in the current sprint with the points completed in previous sprints.
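As a rough sketch of that comparison, assume velocity is recorded as a simple list of story points completed per sprint. The function names, the three-sprint averaging window, and the sample history are illustrative assumptions, not a standard.

```python
from statistics import mean


def average_velocity(points_per_sprint: list[int], window: int = 3) -> float:
    """Average story points over the most recent `window` sprints."""
    if not points_per_sprint:
        raise ValueError("need at least one completed sprint")
    return mean(points_per_sprint[-window:])


def velocity_change(points_per_sprint: list[int]) -> int:
    """Difference between the current sprint and the previous one."""
    if len(points_per_sprint) < 2:
        raise ValueError("need at least two completed sprints")
    return points_per_sprint[-1] - points_per_sprint[-2]


# Hypothetical history for one team, oldest sprint first.
history = [21, 25, 23, 27]
print(average_velocity(history))  # 25
print(velocity_change(history))   # 4
```

Remember that these numbers only mean something within a single team, since story points are not comparable across teams.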

ITERATION COMPLETE to PRODUCTION

Ideally, every iteration should result in a push to production. But how often is this happening, and how long does this take? Track elapsed hours/days from the end of the iteration to the time that the code is in production. If there are wild variances, you may decide to track a rolling average.
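A minimal sketch of this tracking, assuming you can record a timestamp for the end of each iteration and for the matching production deployment. The helper names, the three-iteration window, and the sample delays are hypothetical.

```python
from datetime import datetime


def hours_to_production(iteration_end: datetime, deployed_at: datetime) -> float:
    """Elapsed hours between the end of an iteration and the production deploy."""
    return (deployed_at - iteration_end).total_seconds() / 3600


def rolling_average(values: list[float], window: int = 3) -> list[float]:
    """Average of each value with up to `window - 1` values before it."""
    averages = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        averages.append(sum(chunk) / len(chunk))
    return averages


# One iteration ended Friday 17:00 and shipped Saturday 09:00.
print(hours_to_production(datetime(2024, 1, 12, 17, 0), datetime(2024, 1, 13, 9, 0)))  # 16.0

# Hypothetical delays (in hours) for four iterations, one with a wild variance.
delays = [4.0, 8.0, 48.0, 6.0]
print([round(v, 1) for v in rolling_average(delays)])  # [4.0, 6.0, 20.0, 20.7]
```

The rolling average smooths the one-off 48-hour delay without hiding it entirely, which makes the trend easier to discuss.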

RELEASE FREQUENCY

One of the aims of Agile teams is to deliver in short cycles. Measuring release frequency, the number of releases to production in a given period, shows whether the team is achieving this.

VALUE DELIVERED

This metric requires Product Managers to assign a value to each story – either using a points system or using dollars. A downward trend in this metric could indicate that lower value features are being implemented and that it is time to stop developing the product.
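Here is one way the trend could be tracked, assuming each completed story carries an iteration number and a value score assigned by the Product Manager. The data and function name are illustrative only.

```python
from collections import defaultdict


def value_per_iteration(stories: list[tuple[int, int]]) -> dict[int, int]:
    """Sum the value of completed stories for each iteration."""
    totals: dict[int, int] = defaultdict(int)
    for iteration, value in stories:
        totals[iteration] += value
    return dict(sorted(totals.items()))


# (iteration number, value points) for completed stories; made-up figures.
completed = [(1, 8), (1, 5), (2, 5), (2, 3), (3, 2), (3, 1)]
totals = value_per_iteration(completed)
print(totals)  # {1: 13, 2: 8, 3: 3}

# A strictly falling total from one iteration to the next is the warning sign.
values = list(totals.values())
declining = all(later < earlier for earlier, later in zip(values, values[1:]))
print("Downward trend:", declining)  # Downward trend: True
```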

CYCLE TIME

Cycle time is a measure of the total time it takes for individual issues to get from “in progress” to “done.” Teams with shorter cycle times are likely to have higher throughput, and teams with consistent cycle times across many issues are more predictable in delivering work. This is a popular measure on Kanban teams who will typically plot cycle time on a control chart.

LEAD TIME

Lead time is the time from the moment a request is made and placed on the board to the moment all work on the item is completed and delivered. In other words, it is the total time a requester waits for a requirement to be delivered into production.
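The difference between the two measures is easiest to see side by side. The sketch below assumes each work item records three timestamps and, for simplicity, treats "done" and "delivered" as the same moment. The `WorkItem` class and its field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class WorkItem:
    requested: datetime  # request placed on the board
    started: datetime    # item moved to "in progress"
    done: datetime       # item completed and delivered

    @property
    def lead_time(self) -> timedelta:
        """Time from request to delivery."""
        return self.done - self.requested

    @property
    def cycle_time(self) -> timedelta:
        """Time from "in progress" to "done"."""
        return self.done - self.started


item = WorkItem(
    requested=datetime(2024, 3, 1, 9, 0),
    started=datetime(2024, 3, 4, 9, 0),
    done=datetime(2024, 3, 6, 9, 0),
)
print(item.lead_time.days, item.cycle_time.days)  # 5 2
```

The three-day gap between the two figures is time the item spent waiting in the backlog before anyone picked it up.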

WIP (WORK IN PROGRESS)

Work in progress is something that Lean practitioners consider a form of waste. Lots of work in progress can mean the team is switching focus constantly and may be working as a group of individuals rather than working as a team. Teams should agree on WIP limits and focus on helping members of the team who get blocked rather than starting new tasks while one of their team struggles.
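As an illustration, a WIP limit check can be as simple as counting cards per column and comparing against the limits the team agreed. The column names, limits, and card IDs below are invented for the example.

```python
from collections import Counter


def wip_breaches(items: list[tuple[str, str]], limits: dict[str, int]) -> dict[str, int]:
    """Return each column whose item count exceeds its agreed WIP limit."""
    counts = Counter(status for _, status in items)
    return {
        column: counts[column]
        for column, limit in limits.items()
        if counts[column] > limit
    }


# (card id, current column) for a hypothetical board.
board = [
    ("A-1", "in progress"),
    ("A-2", "in progress"),
    ("A-3", "in progress"),
    ("A-4", "review"),
]
limits = {"in progress": 2, "review": 2}
print(wip_breaches(board, limits))  # {'in progress': 3}
```

A breach is a prompt for the team to swarm on blocked work rather than a reason to start something new.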

Figure: Lead time and Cycle time

Quality

# DEFECTS / DEFECT DENSITY

Track defects found during the iteration (often normalized by the size of the work delivered to give Defect Density) and defects found in production as two separate metrics.

# NEW UNIT TESTS AUTOMATED

Track the number of new unit tests automated in each iteration. This metric can be tracked either as an integer or as a fraction of the total number of new unit tests created.
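A small sketch of the ratio form of this metric follows. The function name and the sample counts are assumptions for illustration.

```python
def automation_share(new_tests_automated: int, new_tests_created: int) -> float:
    """Share of this iteration's new unit tests that were automated (0.0 to 1.0)."""
    if new_tests_automated > new_tests_created:
        raise ValueError("cannot automate more tests than were created")
    if new_tests_created == 0:
        return 0.0  # nothing created this iteration, so nothing to automate
    return new_tests_automated / new_tests_created


# Hypothetical iteration: 24 new unit tests written, 18 of them automated.
print(f"{automation_share(18, 24):.0%}")  # 75%
```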

% AUTOMATED TEST COVERAGE

A measure of how much of the code base is covered by automated tests. Automated testing is a prerequisite for continuous delivery and reduces the amount of time required to release working software.

# DEFECTS DEFERRED

Defects that are identified but deferred to a subsequent iteration are a form of technical debt. Spikes in this metric or a steady climb would warrant further analysis.

Satisfaction

CUSTOMER SATISFACTION

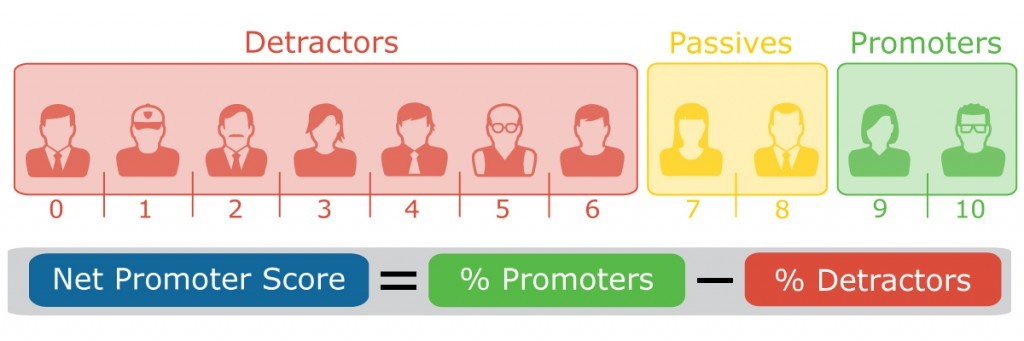

At the team level, this can be done on a ‘per iteration’ basis, using a 1-5 scale or smiley face indicators at Iteration Demos. At an enterprise level, Net Promoter Scores may be used.

TEAM HAPPINESS

This can be done on a ‘per iteration’ basis, using a 1-5 scale or smiley face indicators at retrospectives. Individuals can either assess their own happiness or make a subjective assessment of overall team happiness for discussion.

NET PROMOTER SCORE

NPS is an index ranging from -100 to 100 that measures the willingness of customers to recommend a company’s products or services to others. It is used as a proxy for gauging the customer’s overall satisfaction with a company’s product or service and the customer’s loyalty to the brand.
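The article does not spell out the calculation, so the sketch below uses the conventional one: customers answer a "how likely are you to recommend us?" question on a 0-10 scale, those scoring 9 or 10 count as promoters, those scoring 0 to 6 count as detractors, and the score is the percentage of promoters minus the percentage of detractors. The function name and the sample ratings are made up.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    if not ratings:
        raise ValueError("need at least one survey response")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Ten hypothetical survey responses: 5 promoters, 2 passives, 3 detractors.
responses = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(responses))  # 20.0
```

Passives (scores of 7 or 8) count toward the total number of responses but toward neither group, which is why the score can sit anywhere between -100 and 100.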

Have we missed your favorite Agile Metric? Let us know in the comments box below, and we’ll add it to the list.